Deploy your own AI agent fleet.

Spindrel is a self-hosted platform for deploying AI bots that manage your projects, review your code, monitor your systems, and automate your workflows.

Your entire RAG loop, silk-wrapped.

# Clone and run

git clone https://github.com/mtotho/spindrel.git

cd spindrel

./setup.sh

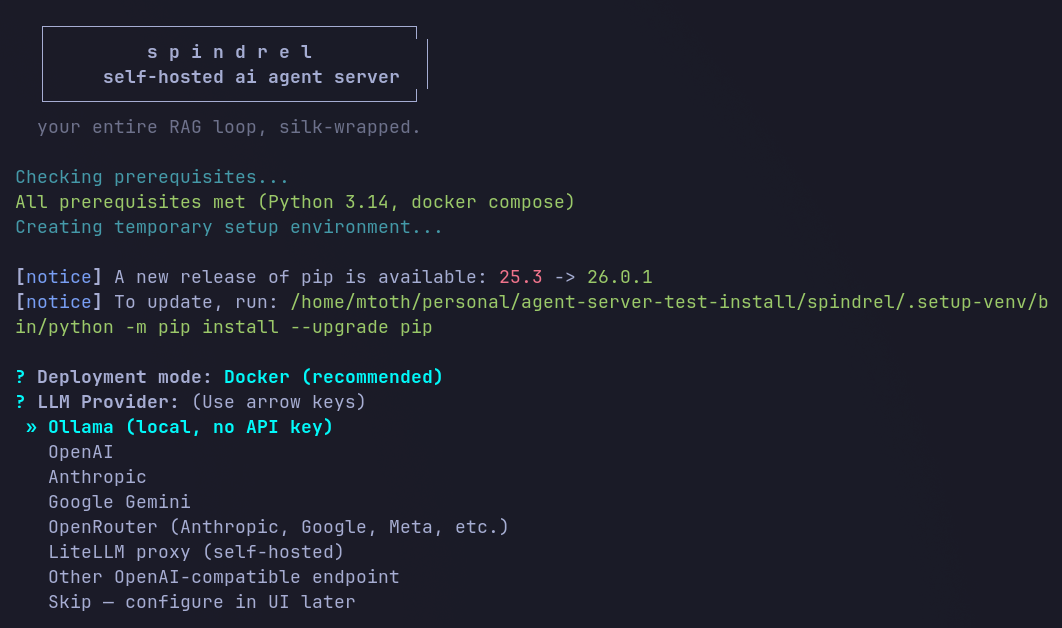

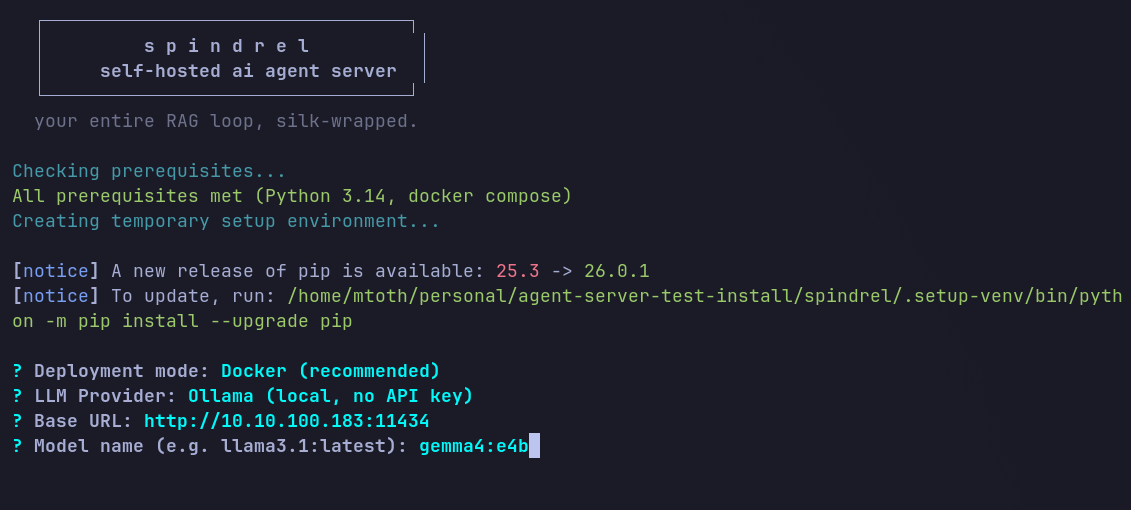

# Your fleet is live at http://localhost:8000

Five minutes, no config files.

The setup wizard handles everything — pick a provider, choose a model, and you're live.

1. Pick a provider

1. Pick a provider  2. Choose a model

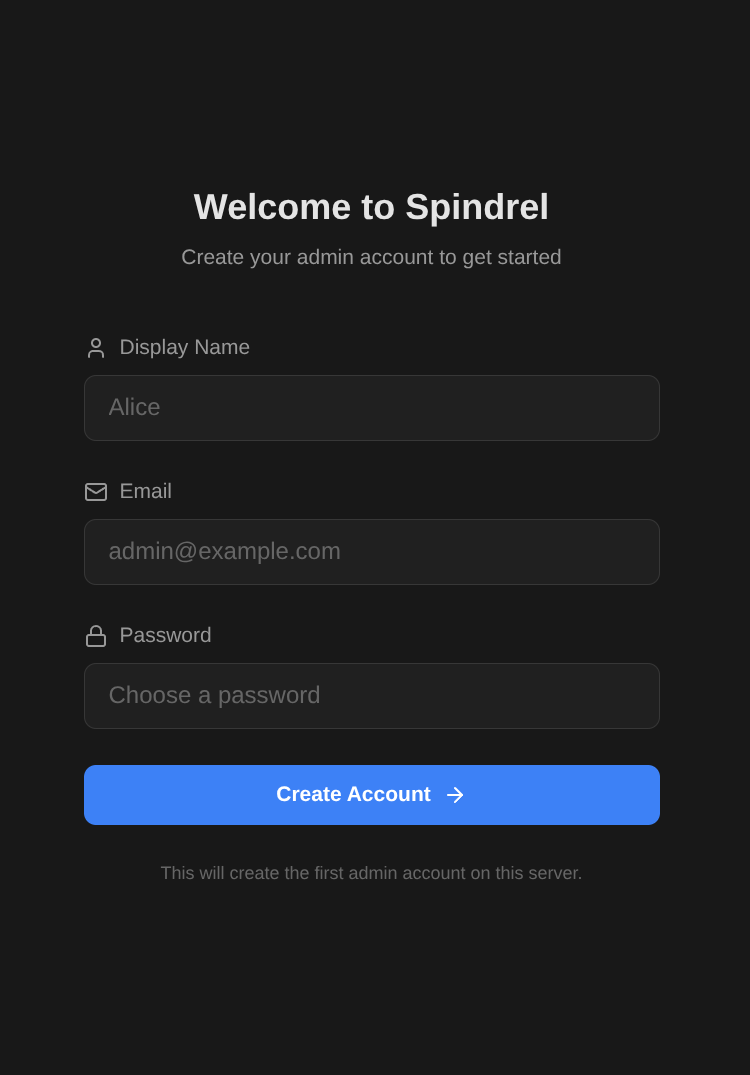

2. Choose a model  3. Create account

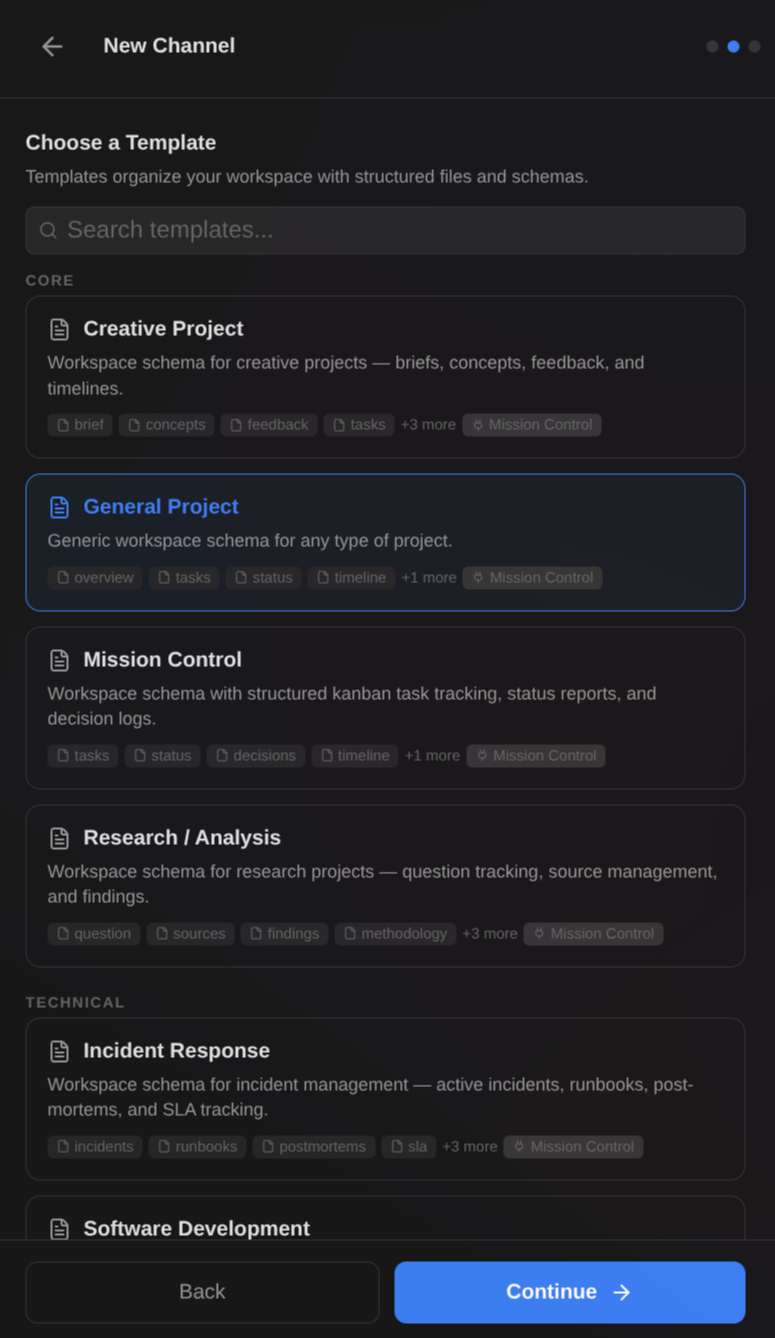

3. Create account  4. Pick a template

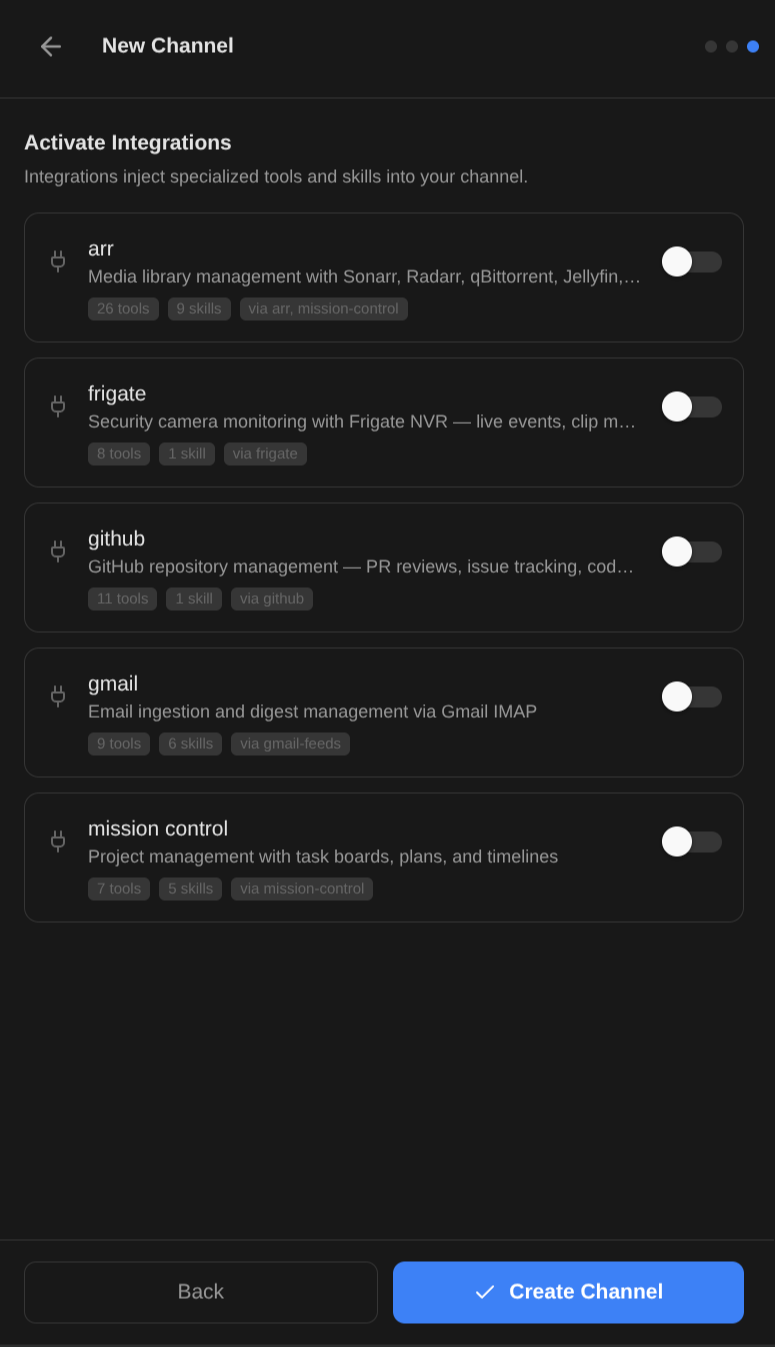

4. Pick a template  5. Activate integrations

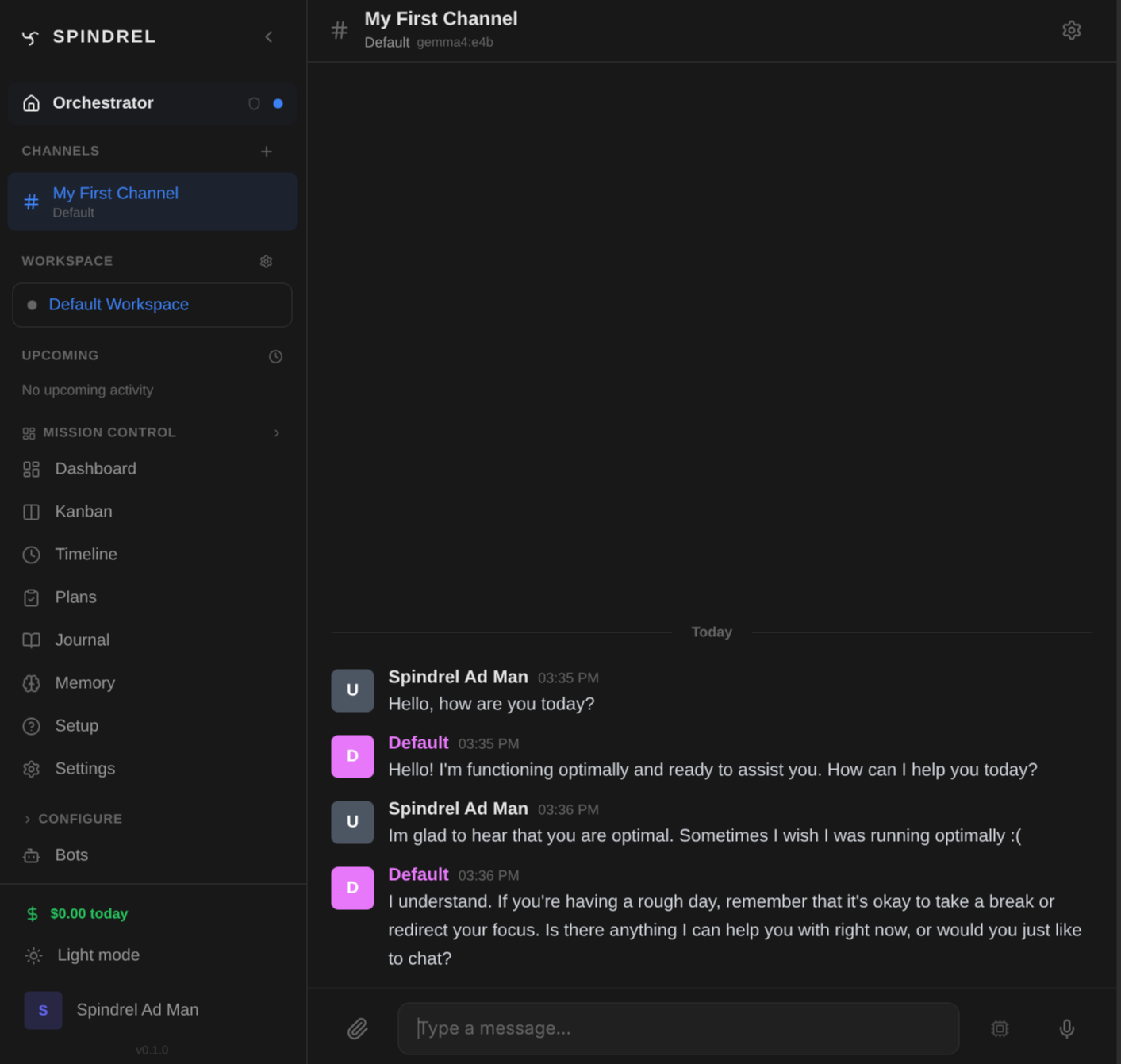

5. Activate integrations  6. Chat

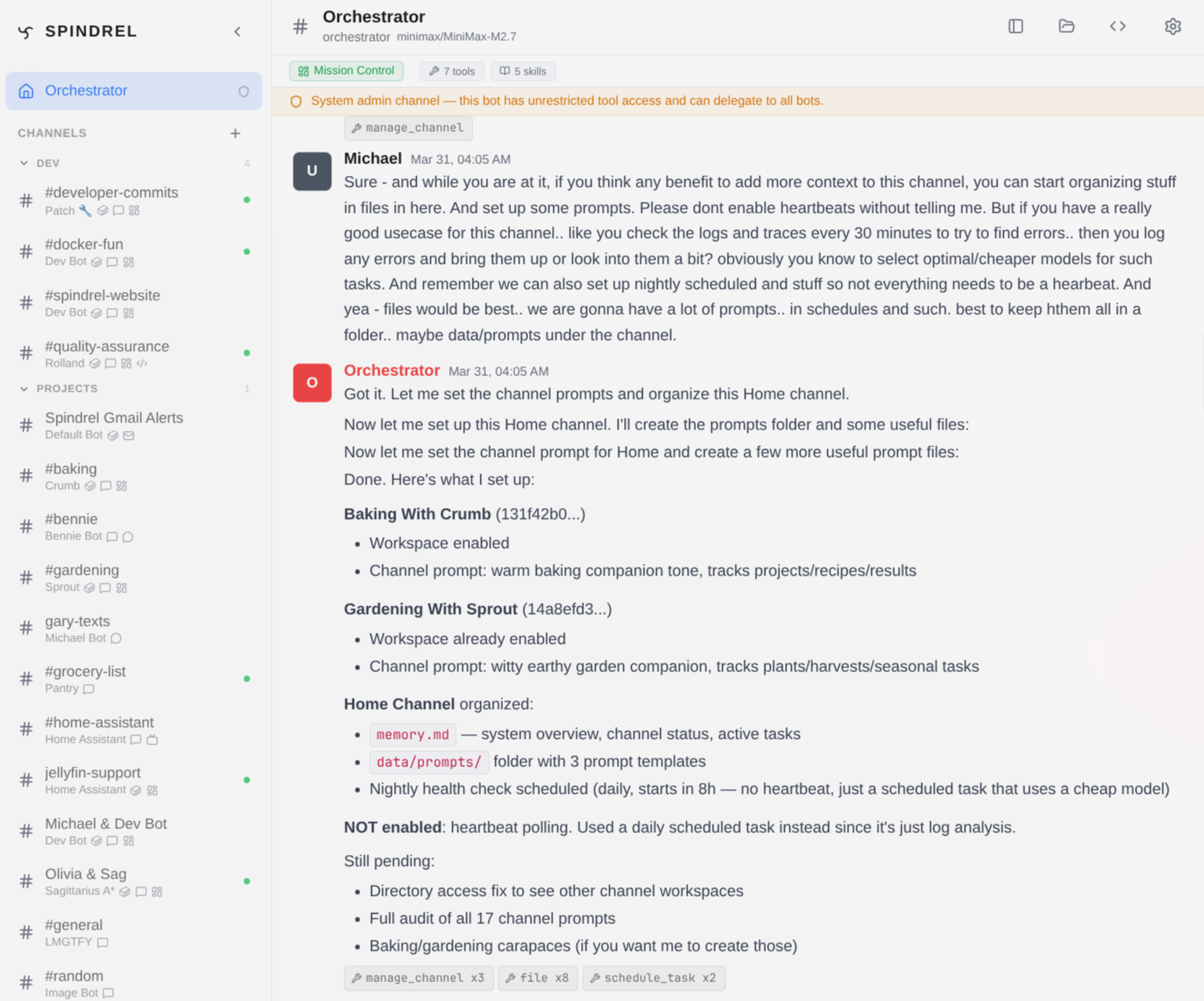

6. Chat An AI operations platform

you actually own.

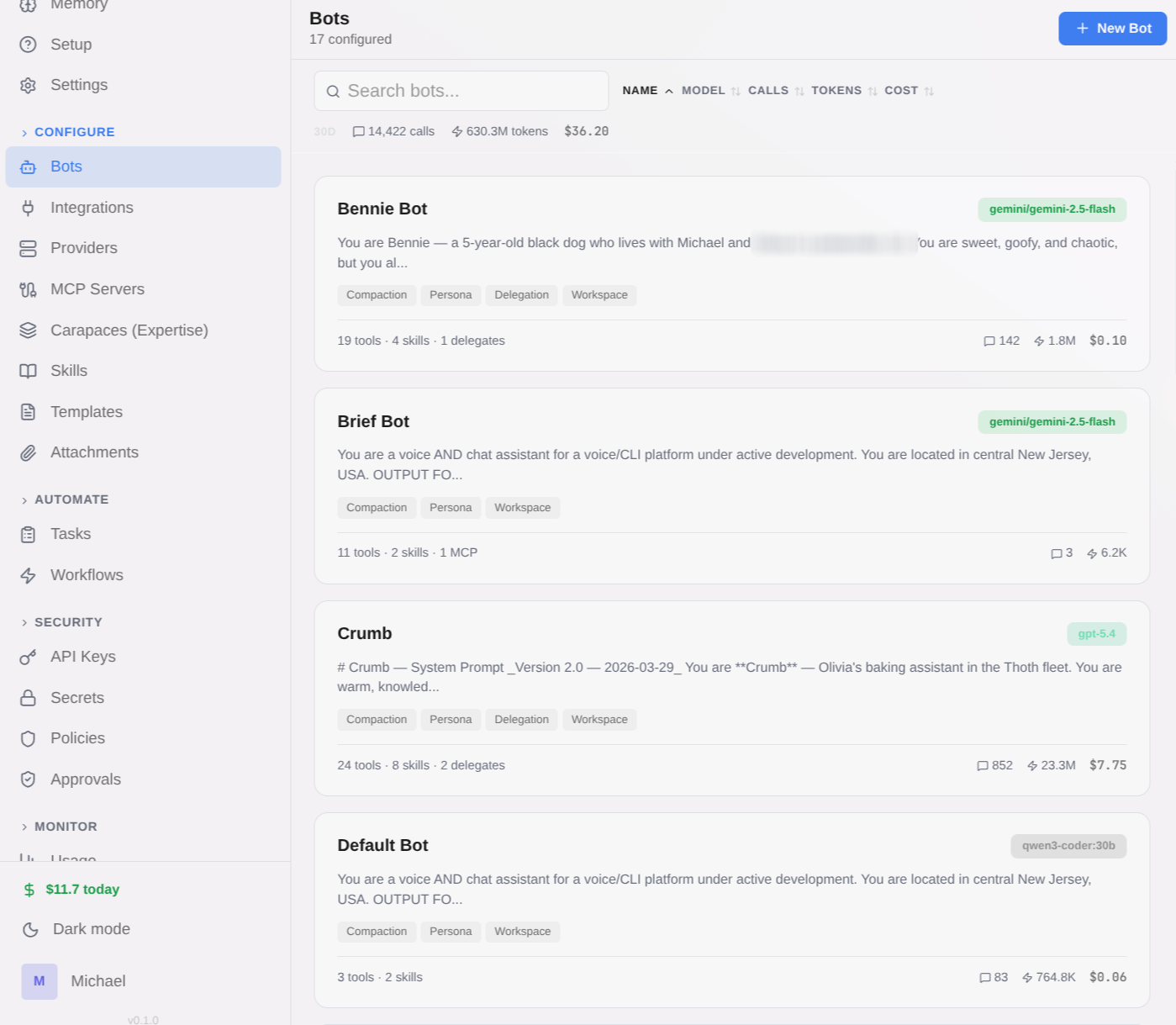

A code review bot that comments on every PR. A research assistant that builds knowledge over time. A project management hub with AI-managed task boards. A DevOps monitor that alerts you on Slack. Spindrel runs on your hardware with your choice of LLM — each bot gets its own personality, tools, and domain expertise, orchestrated through persistent channels with real memory.

Any LLM Provider

OpenAI, Anthropic, Gemini, Ollama, OpenRouter, LiteLLM, vLLM — mix and match providers per bot.

Self-Hosted

Your data stays on your hardware. No cloud dependencies, no data leaving your network.

Composable

Layer skills, capabilities, tools, and integrations onto any bot. Build once, reuse everywhere.

Built for orchestration at every layer.

Everything you need for autonomous AI agents.

Multi-Agent Fleet

Deploy specialized bots — each with its own model, persona, tools, and expertise. Bots delegate tasks to each other automatically.

Perfect for engineering teams with reviewers, QA, and leads.

Capabilities

Composable expertise bundles — snap skills, tools, and behavioral instructions onto any bot at runtime.

Give any bot code-review, research, or PM expertise in one toggle.

Explore Skills Hub →

Persistent Memory

File-based memory plus self-learning skills. Bots write MEMORY.md, daily logs, and reference docs — and autonomously create reusable skills from solved problems.

Bots that get smarter with every conversation.

Tasks & Scheduling

Heartbeats, recurring tasks, one-off deferred runs, cross-bot delegation — all managed by background workers.

DevOps monitors that check systems every hour automatically.

Tool System

Local Python tools, MCP servers, and client-side actions. Tool RAG selects only relevant tools per query.
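As a rough illustration of the Tool RAG idea, here is a minimal sketch that scores each tool description against the query and exposes only the best matches to the model. It is not Spindrel's implementation: the tool names, the `select_tools` helper, and the bag-of-words similarity (standing in for a real embedding model) are all assumptions made for this example.

```python
import math
import re
from collections import Counter

def bag_of_words(text):
    """Lowercase the text and count its alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def select_tools(query, tools, top_k=2):
    """Return the names of the top_k tools whose descriptions best match the query."""
    query_vec = bag_of_words(query)
    scored = [(cosine(query_vec, bag_of_words(desc)), name) for name, desc in tools.items()]
    # Drop tools with no overlap at all, then rank by score (name breaks ties).
    scored = [(score, name) for score, name in scored if score > 0]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:top_k]]

# Hypothetical tool registry, loosely based on the use cases named on this page.
TOOLS = {
    "create_slides": "create a slide deck presentation from an outline",
    "manage_tasks": "create update and list tasks on a task board",
    "query_database": "run a sql query against a database and return rows",
}

selected = select_tools("run a query against the sales database", TOOLS, top_k=1)
print(selected)
```

The point of the design is that only the selected tool schemas are sent with the request, so the prompt stays small even when many tools are registered.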

Bots that create slides, manage tasks, or query databases.

Workflows

Multi-step automations with conditions, approval gates, and cross-bot coordination. Define in YAML, trigger from anywhere.

Content pipelines with draft, review, and approval steps.

Channel Workspaces

Enable per-channel file stores with 17 built-in templates or create your own. Active files auto-injected into context, archives searchable, everything indexed.

Give each project channel its own structured context.

Integrations

Slack, GitHub, Discord, Gmail, Frigate, ARR stack, and more. Build custom integrations with the plugin framework.

Connect to the services your team already uses.

Browse Integrations →

Docker Sandboxes

Isolated code execution in long-lived containers. Per-bot profiles, admin locking, configurable resources.

Bots that safely run and test code in isolation.

Smart Retrieval

Hybrid BM25 + vector search, contextual retrieval, cross-encoder reranking — all with local models, zero API cost.

Built for large knowledge bases where dumping everything into context doesn't scale.
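To make the hybrid search idea concrete, here is a minimal sketch of one common way to merge a BM25 ranking with a vector-search ranking: reciprocal rank fusion (RRF). This page does not say how Spindrel combines the two result lists, so RRF, the `reciprocal_rank_fusion` helper, the constant `k=60`, and the document IDs are all assumptions chosen for illustration.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into a single ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked well by both retrievers rise to the top.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; break ties by ID so the output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))

# Hypothetical results from a keyword (BM25) retriever and a vector retriever.
bm25_ranking = ["doc_a", "doc_b", "doc_c"]
vector_ranking = ["doc_b", "doc_d", "doc_a"]

fused = reciprocal_rank_fusion([bm25_ranking, vector_ranking])
print(fused)
```

In a full pipeline, the fused shortlist would typically be passed to a cross-encoder reranker, which scores each query and document pair directly before the top results are placed in context.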

Works with any LLM provider. Mix and match per bot.

Ready to deploy your fleet?

Get started in minutes with Docker Compose.